Software collaboration keeps evolving. With Continuous AI, GitHub Next are exploring how LLMs can help teams with documentation, quality, triage, and more. Agentic Workflows is a follow‑up exploration: a research demonstrator focused on expressing repository‑level behaviors in natural language and running them on GitHub. Agentic Workflows is not a product and not even a technical preview; it’s a vehicle for learning, exploring the agentic design space, and mapping what works (and what doesn’t) in day‑to‑day repositories.

Explore our docs and examples at githubnext.github.io/gh-aw

Contribute to our implementation at github.com/githubnext/gh-aw

What are Agentic Workflows?

Agentic Workflows are a form of natural language programming over GitHub. Instead of writing bespoke scripts that operate over GitHub using the GitHub API, you describe the desired behavior in plain language. This is converted into an executable GitHub Actions workflow that runs on GitHub using an agentic “engine” such as Claude Code or OpenAI Codex. It’s a GitHub Action, but the “source code” is natural language in a markdown file.

Critically, agentic workflows are compiled to existing GitHub Actions workflows (YAML). In this research demonstrator, you use the gh aw GitHub CLI extension to perform a short, simple, manual compile step to generate the Actions workflow. In the future, we envisage that it may be possible to simply commit a markdown file to .github/workflows, and agentic things “start happening.” Just like YAML files on GitHub Actions today.
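To make the idea of a markdown file as “source code” a little more concrete, here is a minimal Python sketch that separates such a file into its settings block and its natural‑language instructions. It is only an illustration, not how gh aw works internally: the names `WorkflowSource` and `split_workflow` are hypothetical, and the sketch assumes nothing more than a file that opens with a `---`‑delimited settings block followed by free‑text instructions.

```python
from typing import NamedTuple


class WorkflowSource(NamedTuple):
    frontmatter: str   # settings block, kept as raw text
    instructions: str  # natural-language prompt for the agent


def split_workflow(text: str) -> WorkflowSource:
    """Split a markdown workflow into its frontmatter and instructions."""
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        raise ValueError("workflow must start with a '---' frontmatter line")
    # Find the closing delimiter of the frontmatter block.
    for index in range(1, len(lines)):
        if lines[index].strip() == "---":
            frontmatter = "\n".join(lines[1:index])
            instructions = "\n".join(lines[index + 1:]).strip()
            return WorkflowSource(frontmatter, instructions)
    raise ValueError("frontmatter block is never closed")


# A tiny, made-up workflow file used only for this illustration.
EXAMPLE = """---
on:
  push:
    branches: [main]
---

# Documentation Updater

Update the documentation as necessary.
"""

source = split_workflow(EXAMPLE)
print(source.frontmatter)
print(source.instructions)
```

In this picture, the settings block is what maps onto Actions YAML, while the instructions are handed to the coding agent as its prompt.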

The design of Agentic Workflows takes an “Actions-first” approach to the agentic design space. This means the design aligns with familiar concepts from GitHub Actions: repo‑centric execution, team‑visible logs, permissions, partial sandboxing of execution, secrets, environments, triggers, security controls, and job semantics all stay as they are in GitHub Actions. Because Agentic Workflows build on top of GitHub Actions rather than around it, the organization can audit the workflows, version them, and reuse established tools and patterns. Agentic Workflows provide a clearer, more declarative way to express what some users are already doing on GitHub Actions today.

A minimal example

Below is a minimal agentic workflow that keeps documentation up to date. This is just a simple example to illustrate the syntax; a more complete example is available in the examples repository. This is a demonstrator sample and is not for production use.

    ---
    on:
      push:
        branches: [main]
    permissions:
      contents: read
      pull-requests: read
    safe-outputs:
      create-pull-request:
    tools:
      edit:
      web-fetch:
      web-search:
    ---

    # Documentation Updater

    You are a technical writer. Your job is to make the documentation in the repository ${{ github.repository }} _excellent_.

    Steps:

    1. Analyze repository changes. On every push to the main branch, examine the diff to identify changed/added/removed entities.
    2. Review existing documentation for accuracy and completeness. Identify documentation gaps including missing or outdated sections.
    3. Update documentation as necessary.
    4. Create a pull request with a clear description of the changes.

Note that the triggers, permissions, outputs and tools are all made clear and explicit in the definition of the workflow. For triggers (on:) and permissions:, the exact GitHub Actions specifications you are already familiar with are used. The safe-outputs: feature gives highly controlled “write” access back to GitHub: in this example, at most one new pull request can be created, and the coding agent itself does not have “write” permission. For tools: a new declaration form is used. This is followed by the agentic instructions.
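One way to picture the safe-outputs: idea is as a gate between what the agent asks for and what actually gets written. The Python sketch below is a simplified model under stated assumptions, not the gh aw implementation: `RequestedOutput` and `filter_safe_outputs` are hypothetical names, and the limit of one pull request simply mirrors the description above. The `create-issue` request stands in for any output kind the workflow did not declare.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RequestedOutput:
    kind: str   # e.g. "create-pull-request"
    title: str


def filter_safe_outputs(requests, limits):
    """Return (accepted, rejected) requests according to per-kind limits.

    `limits` maps an output kind to the maximum number allowed; kinds that
    are not listed are rejected outright.
    """
    used = {}
    accepted, rejected = [], []
    for request in requests:
        allowed = limits.get(request.kind, 0)
        if used.get(request.kind, 0) < allowed:
            used[request.kind] = used.get(request.kind, 0) + 1
            accepted.append(request)
        else:
            rejected.append(request)
    return accepted, rejected


# The agent only describes what it wants; it holds no write token itself.
requests = [
    RequestedOutput("create-pull-request", "Update API docs"),
    RequestedOutput("create-pull-request", "Rewrite README"),
    RequestedOutput("create-issue", "Undeclared output kind"),
]
accepted, rejected = filter_safe_outputs(requests, {"create-pull-request": 1})
print([r.title for r in accepted])
print([r.title for r in rejected])
```

The point of the design is that the step holding write access applies a fixed, reviewable policy, while the agentic step stays read-only.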

Design philosophy

- GitHub‑native. Agentic Workflows assume access to the GitHub ecosystem, including MCP‑based tools and GitHub‑specific conveniences like ai-reaction, automatic upload of logs, and built‑in job control/reporting.
- Model and engine independence. An agentic workflow is largely independent of the underlying LLM and the coding agent. You shouldn’t have to rewrite your workflows to move from Claude Code to Codex.
- Actions alignment. Repo‑centric, team‑visible, auditable. We inherit Actions’ ergonomics and compile down to Actions YAML so everything remains transparent and source‑controlled.
- Shareable pieces. You can publish and consume packages of workflows, as well as share tool specifications, so teams can standardize and remix.
- Security first. We build on Actions’ existing security model, and add guardrails to keep agentic steps safe, read-only and reviewable.
- You’re in control. There are no hidden prompts or secret sauce. You can inspect the generated YAML and own the behavior end‑to‑end.

Multi‑engine by design

Agentic Workflows are engine‑neutral. They support multiple coding agents, including Claude Code and OpenAI Codex. Because the natural‑language “program” is decoupled from the engine, you get portability: keep your workflow, swap your engine, compare results.

Where natural language shines

Natural‑language programming works best where a bit of productive ambiguity helps. A human teammate understands instructions like “read the coverage report and write tests to improve coverage.” That’s not a line‑by‑line spec, but it is a useful direction for an agent to take initiative. Agentic Workflows are built to express those kinds of intentions cleanly, and then run them safely in a team context with logs, guardrails, and review.

How it fits with Continuous AI

Continuous AI is our umbrella project opening the door for the industry to a new era of AI‑enriched automation in software collaboration. Agentic Workflows are one concrete demonstrator of how some of that workload can be implemented on GitHub today. If Continuous AI is the category, Agentic Workflows are one way of doing it, focusing on clarity, control, and reuse.

What can be built with Agentic Workflows

We’re especially excited about use cases that help open‑source maintainers and team health:

- Issue triage and labeling. Keep the queue tidy, summarize conversations, route work, and suggest next actions.
- Continuous QA. Propose targeted test cases, run selective checks, and file PRs that improve robustness.
- Accessibility review. Continuously scan code and docs, raise actionable issues, and open PRs with suggested fixes.
- Continuous documentation. Improve README fragments and API docs as code evolves; nudge authors when explanations drift.
- Continuous test improvement. Suggest new tests based on coverage reports and code changes.

These are the important (and unglamorous) tasks that help projects scale. They’re also ideal for agentic automation because they’re repetitive, collaborative, auditable, and cannot be expressed as simple heuristics — they benefit from a touch of judgment and careful fitting into the team’s workflow.

We’ve created a number of demonstrator workflows to illustrate these use cases and published them at github.com/githubnext/agentics. These examples show how to automate common tasks, such as triaging issues, improving documentation, and enhancing code quality. They serve as a reference point for teams to explore how agentic natural language programming is relevant to their own repositories.

Built on what you already use

Agentic Workflows stand on the shoulders of the ecosystem: GitHub Actions, MCP‑enabled tools, and AI providers. We embrace the community around LLM frameworks, tools, and actions. Agentic Workflows don’t replace those pieces; they compose them into something easier to author, share, and reason about from inside your repo.

Security and safety

Security is foundational — Agentic Workflows inherit GitHub Actions’ sandboxing model, scoped permissions, and auditable execution. The attack surface of agentic automation can be subtle (prompt injection, tool invocation side‑effects, data exfiltration), so we bias toward explicit constraints over implicit trust: least‑privilege tokens, allow‑listed tools, and execution paths that always leave human‑visible artifacts (comments, PRs, logs) instead of silent mutation.
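As a rough illustration of “explicit constraints over implicit trust”, the Python sketch below performs two checks one might want before an agentic job runs: that its token carries no write scopes, and that every tool it asks for is on the allow‑list. The function names are hypothetical and this is not the gh aw implementation; the permission and tool values mirror the minimal example earlier in this post, and `shell` is a made‑up tool name used only to show a rejection.

```python
def write_scopes(permissions: dict[str, str]) -> list[str]:
    """List the permission scopes that grant write access."""
    return sorted(scope for scope, level in permissions.items() if level == "write")


def denied_tools(requested: list[str], allowed: set[str]) -> list[str]:
    """List requested tools that are not on the allow-list, in request order."""
    return [tool for tool in requested if tool not in allowed]


# Values mirror the minimal example in this post.
permissions = {"contents": "read", "pull-requests": "read"}
allowed_tools = {"edit", "web-fetch", "web-search"}

# "shell" is a made-up tool name used only to show a rejection.
requested_tools = ["edit", "shell", "web-search"]

print(write_scopes(permissions))                      # scopes with write access
print(denied_tools(requested_tools, allowed_tools))   # tools to refuse
```

Both checks are plain data comparisons, which is what makes this kind of policy easy to review and version alongside the workflow.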

A core reason for building Agentic Workflows as a research demonstrator is to closely track emerging security features in coding agents. With Agentic Workflows we can assess these features under near‑identical inputs, so differences in behavior and guardrails are comparable. Additionally, the “compilation” process of Agentic Workflows enforces additional security checking suitable for the GitHub Actions context, for example highly restricted context substitutions. We are in active conversations with providers of coding agents to increase and improve the security-related offerings available in the engines. Alongside engine evolution, we are working on our own additional mechanisms including MCP proxy filtering, container-configuration and hooks‑based security checks that can veto or require review before effectful steps run.
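The idea of “highly restricted context substitutions” can be sketched as a compile‑time scan of the prompt for `${{ ... }}` expressions, rejecting any that are not explicitly allowed. The Python below is an assumption‑laden illustration rather than the actual compiler check: the allow‑list contents and the function name `disallowed_expressions` are hypothetical. The motivation is that values such as an issue title are written by arbitrary users, so substituting them straight into a prompt is a prompt‑injection risk.

```python
import re

# Hypothetical allow-list; the real compiler's rules may differ.
ALLOWED_EXPRESSIONS = {"github.repository", "github.run_id"}

# Matches ${{ expr }} and captures expr without surrounding whitespace.
EXPRESSION = re.compile(r"\$\{\{\s*(.*?)\s*\}\}")


def disallowed_expressions(prompt: str) -> list[str]:
    """Return every ${{ ... }} expression in the prompt that is not allow-listed."""
    found = EXPRESSION.findall(prompt)
    return [expr for expr in found if expr not in ALLOWED_EXPRESSIONS]


prompt = (
    "Improve the docs in ${{ github.repository }}.\n"
    "Issue title: ${{ github.event.issue.title }}\n"
)
problems = disallowed_expressions(prompt)
print(problems)
```

A compile step built this way would refuse to generate the workflow while `problems` is non‑empty, so the restriction is enforced before anything runs.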

We aim for strong, declarative guardrails — clear policies the workflow author can review and version — rather than opaque heuristics. Lock files are fully reviewable so teams can see exactly what was resolved and executed. This will keep evolving; we would love to hear ideas and critique from the community on additional controls, evaluation methods, and red‑team patterns.

Prior work

Agentic Workflows build on what we learned in earlier GitHub Next projects. The project is a descendant of SpecLang, which explored end‑to‑end development from a structured, Markdown‑like “spec” that serves as the program’s source of truth. It’s also inspired by Copilot Workspace, where we frequently discussed the need to continuously automate tasks like documentation and test improvement.

We use both Claude Code and Codex directly. We have been inspired by Claude Code GitHub Actions and the SuperClaude Framework. SuperClaude includes the convenient @include syntax for composing Markdown prompts from reusable fragments. That idea of modular, shareable building blocks shows up in Agentic Workflows’ design for sharing tool specs and workflow components.

We were also directly inspired by the Agentic Workflow Definitions project by @danielmeppiel, which informed parts of this project’s premise and design.

Why a research demonstrator (and not a product)?

Because we are still learning. There are sharp edges around resource limits, tool reliability, evaluation, and safety. We’re optimistic that these seams will tighten, and perhaps one day something like Agentic Workflows will be integrated directly into GitHub.com, but we’re not there yet. Shipping a research demonstrator facilitates conversations with providers of coding agents, product designers, and the GitHub Community. This lets us learn out in the open with you: what abstractions stick, what stays portable across engines, and what governance and UX patterns teams actually need.

Get involved

- Explore the repos: github.com/githubnext/gh-aw; examples at github.com/githubnext/agentics.
- Read the README for setup details, examples, and the design rationale.
- Try a workflow in a test repo. Start small, keep the agentic step focused, and wire it to a low‑risk trigger.
- Share feedback on what’s confusing, what’s missing, and what patterns you’d like to reuse across repos. Please file issues in github.com/githubnext/gh-aw and chat with us in the Continuous AI channel in the Next Discord server.

Agentic Workflows is an invitation to help invent the next layer of repo automation, one that’s conversational, auditable, and works the way teams actually collaborate. We’re excited to explore with you.

Related Posts

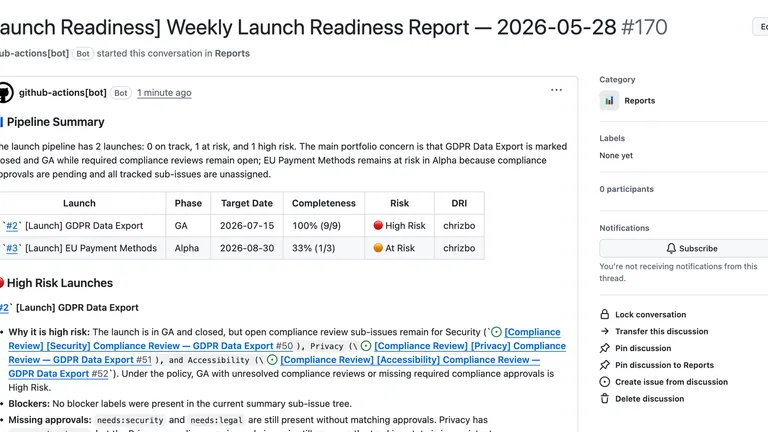

Agentics Beyond Code

What happens when you give PMs, compliance teams, and leaders their own agents? A tour of Agentics Beyond Code — an open-source set of GitHub Agentic Workflows for the non-engineering roles that ship, govern, and operate products.

Canary: a harm gate for agentic systems

Canary puts a small, auditable gate in front of agentic workflows so untrusted artifacts are classified before powerful agents act on them.

The Impact of Automated Repository Maintenance Assistance

https://github.com/githubnext/repo-assist-impact/blob/main/report.md

What happens when a proactive AI repository agent is deployed across 13 open source repositories? 578 issues closed, median 8x increase in issue closure velocity, and 10x in PR merge velocity — transforming largely dormant projects into actively maintained ones. The single most important factor? The rate at which human maintainers decide to act.

Agents are power tools

A practical mental model for agents, workflows, and human-machine systems in agentic engineering.

Agency is the New Resilience

Agents can power robust workflows by intelligently reacting to unexpected conditions, creating a new form of flexible resilience.

Understanding Repositories as Human/Agent Knowledge Factories

https://dsyme.net/2026/05/05/understanding-repositories-as-human-agent-knowledge-factories-%f0%9f%9a%80/

How do you maintain team velocity when AI-generated code needs cleanup? You have two choices: slow everyone down with more review hurdles, or let automated agentic processes clean things up after the fact. The second path is the key to velocity — and it’s now practical with repository automation.

Lean Squad: Exploring Automated Software Verification with Near-Zero Human Labour

https://dsyme.net/2026/04/20/lean-squad-automated-software-verification-with-near-zero-human-labour/

What if formal verification could be fully automated — from researching the codebase, to writing specifications, to proving theorems in Lean 4 — all with near-zero human involvement? Lean Squad is a GitHub Agentic Workflow that does exactly this. Applied to three real-world codebases, it produced over 1,200 machine-checked theorems and found real bugs in a drone autopilot.

Start Your Day With Code That’s Better

https://dsyme.net/2026/03/08/start-your-day-with-code-thats-better/

What if you woke up every morning to find your repositories a little bit better than when you left them? A performance improvement here, a feature analysis there, an engineering upgrade you didn’t know was possible. That’s what automated repository maintenance with Repo Assist looks like in practice.

Adding Weighted Task Selection to a GitHub Agentic Workflow

https://dsyme.net/2026/03/07/adding-weighted-task-selection-to-a-github-agentic-workflow/

How should an automated repository assistant decide what to work on next? Round-robin treats every task as equally important regardless of repo state. A weighted approach means the agent now does the right thing more often: when there’s a mountain of unlabelled issues it labels, when the backlog is clear it invests in engineering.

Repo Assist: Crunching the Technical Debt with GitHub Agentic Workflows

https://dsyme.net/2026/02/25/repo-assist-a-repository-assistant/

Can automated repository assistants help maintainers re-engage with stale repositories weighed down by years of technical debt? Repo Assist uses GitHub Agentic Workflows to label issues, answer questions, propose fixes, and make engineering improvements — all while the maintainer stays in control through pull request review.

Automate repository tasks with GitHub Agentic Workflows

https://github.blog/ai-and-ml/automate-repository-tasks-with-github-agentic-workflows/

Coding agents bring new, magical powers to repository automation — and we believe developers, teams and communities should be empowered to shape their use according to their own needs, goals and responsibilities. Our new post on the GitHub Blog introduces GitHub Agentic Workflows as a third leg to augment CI/CD: Continuous AI.

Generative AI and Changing Inputs

https://dsyme.net/2026/01/27/generative-ai-and-changing-inputs/

Every AI feature that generates documentation, synthesizes specifications, or discovers build rules must deal with changing inputs. But when things change, competing goals emerge: freshness, stability, convergence, performance. How do we build incremental AI functions that balance these tradeoffs?

Towards Semi-automatic Agentic Performance Engineering

https://dsyme.net/2025/10/12/towards-semi-automatic-performance-engineering/

Performance engineering is stunningly hard and heterogeneous — every major piece of software is a cornucopia of delight and a vast swamp of complexity. What if coding agents could walk up to arbitrary software repositories and perform realistic, useful performance work? Sometimes it works. Sometimes it’s delusional. But the impossible is gradually revealing itself to be just partially tractable.

What Kind of Programming is Natural Language Programming?

https://dsyme.net/2025/09/02/what-kind-of-programming-is-natural-language-programming/

Natural language programming is more akin to constraint programming than to traditional precise programming. What we call ambiguity is often genuinely useful generality — and the art is often in specifying less, not more. So what kinds of natural language programming are viable, and what are the limits?

On Continuous AI for Test Improvement

https://dsyme.net/2025/08/27/on-continuous-test-improvement/

Better testing means better software. Within an hour of trialling the Daily Test Coverage Improver on three of the most popular libraries on the planet, multiple PRs improving test coverage were ready. Can Continuous AI finally help the tech industry pay off 50 years of testing debt?

On Natural Language Programming

https://dsyme.net/2025/08/27/on-natural-language-programming/

Dijkstra’s Ghost and the End of The Symbolic Supremacy. As of 2025, there is serious trouble in the kingdom of precise programming: well-written natural language is now sufficient to act as instructions for repeatedly guiding computers to achieve human-relevant tasks. Is it time to replace The Symbolic Supremacy with The Clarity Supremacy?